In early 2026, Anthropic rolled out 1M token context windows for Claude, removed the long-context pricing premium, and expanded media limits. Google's Gemini already offered 1M+. The message from the industry was clear: the context problem is a capacity problem, and we're fixing it with bigger windows.

Developers celebrated. No more compaction anxiety. No more losing instructions mid-session. No more cramming everything into 200K tokens. A million tokens is roughly 750,000 words. Surely that's enough.

It isn't. And the research is unambiguous about why.

What the Research Actually Shows

Chroma tested 18 frontier models across eight input lengths and found degradation at every increment. Not near the limit. At every increment. Their conclusion: a 1M-token window still degrades at 50K tokens. The decline is continuous, not a cliff.

This phenomenon has a name now: context rot. As you add tokens to an LLM's input, the quality of its output decreases. The model hasn't run out of space. The transformer architecture's attention mechanism is simply stretched thinner with every token added.

Anthropic's own engineering team describes it in their context engineering guide: models have an "attention budget" that gets depleted as context grows. Every new token introduced reduces the budget available for every other token. They compare it to human working memory: finite, and subject to diminishing returns as load increases.

The maths behind this is straightforward. Transformer attention creates pairwise relationships between every token and every other token. At 10,000 tokens, that's 100 million relationships. At 100,000 tokens, it's 10 billion. At 1 million tokens, it's 1 trillion. Softmax normalisation distributes attention across all of these. The more tokens present, the less attention each individual token receives.

A rule written in your CLAUDE.md that gets strong attention at 10K tokens of context gets 10x less attention at 100K tokens and 100x less at 1M tokens. The rule didn't change. The model's ability to attend to it did. This is one of the core reasons CLAUDE.md breaks at scale.

The "Lost in the Middle" Problem

Stanford researchers documented this in 2023 and the finding has held across every model generation since: LLMs attend well to the beginning and end of their context but poorly to the middle. Accuracy drops by 30% or more for information placed in the middle of a long context.

For coding agents, this is devastating. Your CLAUDE.md is loaded at the start (good attention). Your current conversation is at the end (good attention). Everything in between, the accumulated tool calls, file reads, search results, error messages, and previous conversation turns, occupies the middle where attention is weakest.

A critical architectural decision you discussed 40 minutes into a session sits in the middle of the context by the time you're 90 minutes in. The model doesn't "forget" it. It physically cannot attend to it as strongly when surrounded by hundreds of thousands of other tokens.

Increasing the window from 200K to 1M doesn't fix this. It makes the middle bigger. More information in the low-attention zone. More opportunities for the model to miss something important.

What Developers Are Actually Experiencing

A GitHub issue filed against Claude Code in March 2026 documented the practical impact. The developer reported that corrections made during a session were acknowledged by the model and then reverted in subsequent turns. The model agreed with the correction, continued working, and then repeated the original mistake because the correction sat in the middle of a growing context while the original (incorrect) pattern had been reinforced multiple times earlier in the session.

Their analysis: "Corrections occur late in context and compete with stale beliefs repeated early." The fix they proposed wasn't a bigger window. It was structural priority weighting: pinning corrections so they receive higher attention regardless of position.

Factory.ai, which builds AI coding agents for enterprise, describes the same phenomenon from the infrastructure side: "Larger windows do not eliminate the need for disciplined context management. Rather, they make it easier to degrade output quality without proper curation." Their solution is a context stack that progressively distils organisational knowledge into "exactly what the agent needs right now."

Latent Space's AI newsletter put it bluntly in March 2026: they're willing to bet that context windows do not meaningfully go higher than 1M in the next two years. The window size race has hit a practical ceiling. The constraint isn't compute. It's that more tokens past a certain point make things worse, not better.

The Cost Problem Nobody Talks About

Even if attention degradation were solved tomorrow, the cost of processing 1M tokens on every message is significant.

Anthropic removed the long-context pricing premium, which is good. But standard per-token pricing still applies across the full window. At Claude's current rates, a 500K token input costs meaningfully more than a 50K token input. Over dozens of messages in a session, the difference is substantial.

More importantly, the token cost includes waste. A coding agent session accumulates context that is no longer relevant: file reads from files that have since been edited, search results that led to dead ends, error messages from bugs that have been fixed, entire conversation branches about approaches that were abandoned. All of these tokens sit in the context, consuming attention budget and billing, contributing nothing to the current task.

A bigger window doesn't distinguish between useful context and noise. It just holds more of both. The agent pays attention to (and you pay money for) irrelevant tokens alongside relevant ones.

Compaction Doesn't Fix It Either

The standard response to context growth is compaction: summarise the conversation so far and start a fresh context with the summary. Claude Code does this automatically when context gets long.

Compaction helps. It's better than hitting the window limit and crashing. But it's lossy by definition. Every summarisation throws away details. Anthropic's engineering team describes their compaction as preserving "architectural decisions, unresolved bugs, and implementation details while discarding redundant tool outputs or messages." That's a best-effort heuristic, not a guarantee.

The developer who spent 20 minutes debugging a specific edge case might find that the compaction summary reduced their detailed investigation to a single line. The nuance is gone. The specific findings are gone. The next time the model encounters the same edge case, it doesn't have the detailed context from the investigation. It has a summary that says "debugged edge case in auth module."

Compaction also doesn't help with project knowledge that should persist across sessions. A compaction summary is session-scoped. It doesn't carry architectural decisions, coding patterns, or deployment history into the next session. When the session ends, the compacted summary is gone.

The Real Problem: Wrong Context, Not Insufficient Context

The framing of "we need more tokens" assumes the problem is capacity. It isn't. The problem is relevance.

A coding agent working on the CSS layer of an application doesn't need 500K tokens of context. It needs maybe 5K tokens of precisely relevant context: the CSS rules for the project, the design tokens, the component patterns, the warnings about CSS-specific gotchas, and the recent deployment history. Everything else, the database schema, the API response format, the auth implementation details, is noise that dilutes attention.

Loading 5K tokens of high-signal context into a 200K window produces better results than loading 500K tokens of mixed-relevance context into a 1M window. The model has more attention budget per relevant token. The signal-to-noise ratio is higher. The output quality is better.

This is what Anthropic means when they describe context engineering as "finding the smallest possible set of high-signal tokens that maximise the likelihood of producing the desired output." Not the most tokens. The right tokens.

Two Approaches to the Right Tokens

The industry is splitting into two camps on how to select the right tokens:

Conversational memory (semantic retrieval)

Store everything the agent has seen and done. When context is needed, embed the current query and retrieve the most semantically similar memories. This is the approach taken by Mem0, Letta, Zep, and most agent memory startups.

What it's good for: Recalling what happened in past conversations. "What did we discuss about authentication last week?" "What were the user's preferences for the report format?" (For a deeper comparison of these approaches, see The Context Engineering Problem Nobody's Solving.)

Where it falls short for coding agents: Semantic similarity is the wrong retrieval model for operational context. "Give me all high-priority rules" isn't a similarity query. "What are the deployment steps?" needs a structured procedure, not ranked memory fragments. "What are users reporting about the export feature?" needs structured feedback data, not memories of conversations where exports were mentioned.

Semantic retrieval also has a hit-or-miss quality that structured retrieval doesn't. The right memory might not surface if the current query uses different terminology than the stored memory. A rule about "never using inline styles" might not surface when the query is about "CSS best practices" because the semantic similarity isn't strong enough to rank it above other memories.

Project memory (structured retrieval)

Store project knowledge as typed, tagged, queryable entries. When context is needed, query by type, tags, scope, and priority. Load exactly what's relevant to the current task.

What it's good for: Loading operational context: the rules that govern the project, the decisions that shaped the architecture, the warnings about known gotchas, the procedures for deployment, the feedback from users, the design system constraints.

Where it excels for coding agents: Every query is deterministic. "Give me all high-priority rules" returns all entries with type=rule and priority=high. Nothing is missed because of semantic mismatch. Nothing irrelevant is included because of spurious similarity. The agent gets exactly what it asked for.

Structured retrieval is also cacheable. The same query returns the same results (until entries are added or modified), which means the model's KV-cache can reuse previous computations. The Manus team identified KV-cache hit rate as the single most important metric for production agents. Structured, stable context maximises cache hits. Semantic retrieval, which returns different results based on the current query embedding, minimises them.

What "Better Context Management" Looks Like

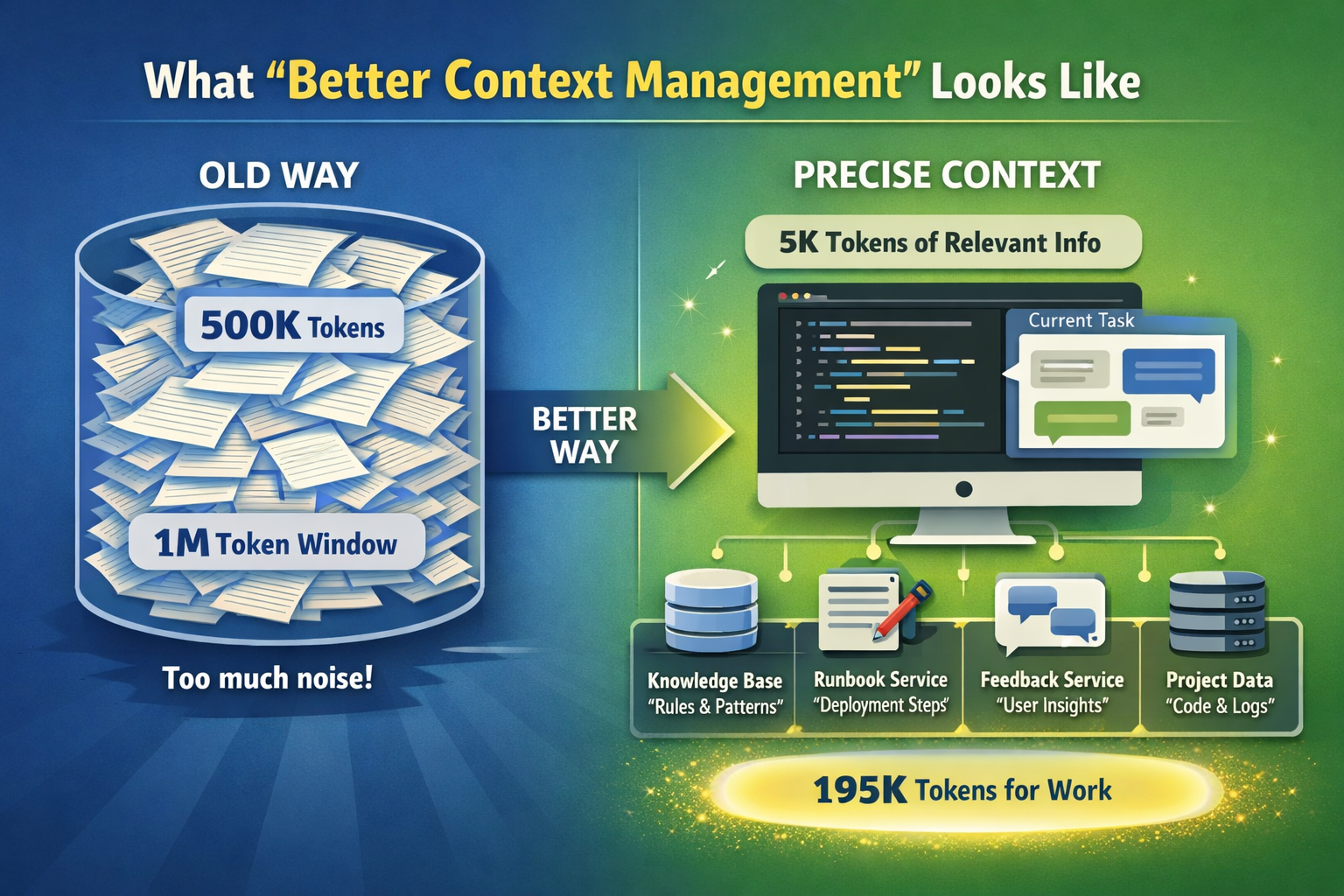

Instead of stuffing 500K tokens into a million-token window and hoping for the best:

Load 5K tokens of precisely relevant context. Query a structured knowledge base for the rules, patterns, and warnings relevant to the current task. Not everything. Not a semantic guess. Exact matches on type, tags, scope, and priority.

Keep project knowledge outside the conversation. Rules, decisions, patterns, and warnings are project knowledge, not conversation history. They belong in a persistent, queryable store that the agent loads from at session start, not in a flat file that competes with conversation tokens for attention.

Capture knowledge in the moment, retrieve it on demand. When the agent discovers a gotcha during development, store it as a typed, tagged entry. Future sessions load it only when working on the relevant area. The knowledge base grows without growing the per-session context load.

Use the context window for the current task. The window's job is to hold the current conversation, the current code, and the current problem. Project knowledge is loaded from a structured store. Deployment procedures are loaded from a runbook service. User feedback is loaded from a feedback service. The window holds the work. External services hold the knowledge.

This approach works with any context window size. A 200K window with 5K tokens of structured context and 195K tokens available for work performs better than a 1M window with 500K tokens of unstructured context and 500K tokens of noise.

The Bigger Picture

The context window size race is a red herring. It's the AI equivalent of the megapixel race in cameras: a number that's easy to market but doesn't determine the quality of the output.

What determines output quality is signal-to-noise ratio in the context. How much of what the model sees is relevant to the current task? How precisely can the model attend to the important parts? How much irrelevant content is competing for attention?

The answer to better AI coding agents isn't bigger windows. It's smarter context. Structured project memory that loads the right information at the right time. Services that give the agent operational awareness: what was deployed, what users are saying, what procedures to follow, what design constraints to respect. An infrastructure layer between the model and the work.

The models will keep getting bigger context windows. That's fine. Use the extra space for the current task, not for a bigger pile of everything the agent might conceivably need. Let external services handle the knowledge. Let the window handle the work. If you're ready to try this approach, here's how to give your AI coding agent persistent memory.

Built by Minolith. Persistent project memory for AI coding agents. Structured context, changelogs, feedback, runbooks, agent orchestration, and design systems. All via MCP.